This short article is an excerpt from our webinar “Demystifying the Distributed Database Landscape.” You can register now to watch the full webinar.

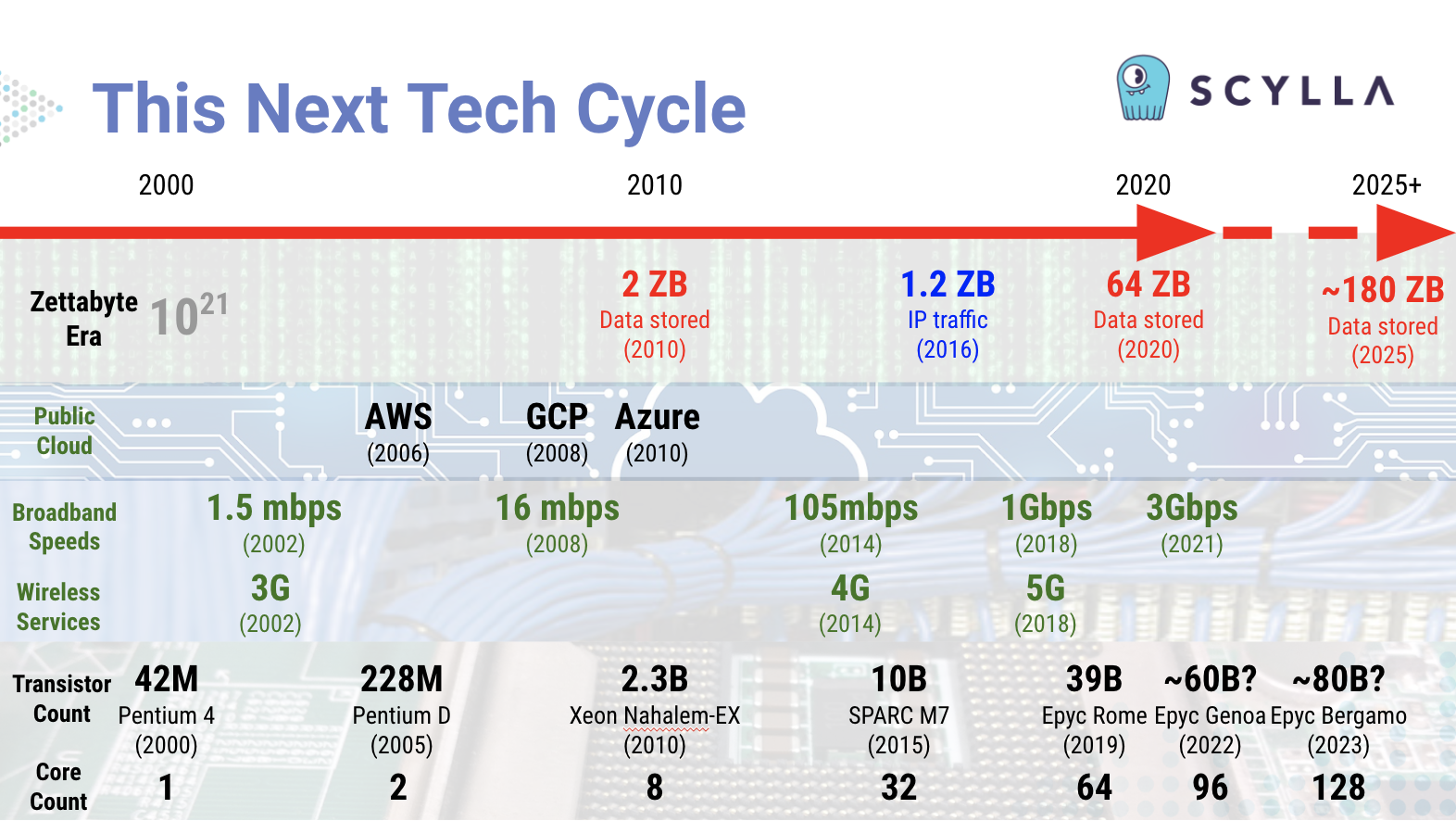

We’re in the middle of what we at ScyllaDB have called “This Next Tech Cycle.” Not “the world of tomorrow,” nor “the shape of things to come,” nor “the wave of the future.” It’s already here today. We’re in the thick of it. And it’s a wave that’s carrying us forward from trends that got their start earlier this century.

Trends in technology shaping this next tech cycle: total data volumes at zettabyte scale, the expansion of public cloud services, gigabit-per-second broadband and wireless services, and CPU transistor and core counts that continue to grow.


Everything is co-evolving, from the hardware you run on, to the languages and operating systems you work with, to the methodologies you use every day. All of those familiar technologies and business models are themselves undergoing radical change.

This next tech cycle goes far beyond “Big Data.” We’re talking huge data. Welcome to the “Zettabyte Era.” Depending on who is defining it, this era began either in 2010, for total data stored in the world, or in 2016, for total Internet Protocol traffic in a year.


Right now, individual data-intensive corporations are generating information at the rate of petabytes per day and storing exabytes in total. Some prognosticators think we’ll see humanity, our computing systems, and our IoT-enabled machinery generating half a zettabyte of data each day by 2025.
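To put that half-a-zettabyte-per-day projection into more familiar units, here is a quick back-of-the-envelope conversion. It is a minimal sketch that assumes decimal (SI) units, where one zettabyte is 10^21 bytes, and it yields only an average rate; real traffic is far burstier than this.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds
ZETTABYTE = 10**21              # SI (decimal) zettabyte, in bytes (assumption: not ZiB)
PETABYTE = 10**15               # SI (decimal) petabyte, in bytes


def daily_volume_to_rate(bytes_per_day: float) -> float:
    """Convert a daily data volume into an average bytes-per-second rate."""
    return bytes_per_day / SECONDS_PER_DAY


rate = daily_volume_to_rate(0.5 * ZETTABYTE)
print(f"{rate / PETABYTE:.2f} PB/s on average")  # → 5.79 PB/s on average
```

In other words, the projection works out to nearly six petabytes arriving every second, around the clock.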


Yet conversely, we’re also seeing the importance of small data. Look at the genomics revolution.


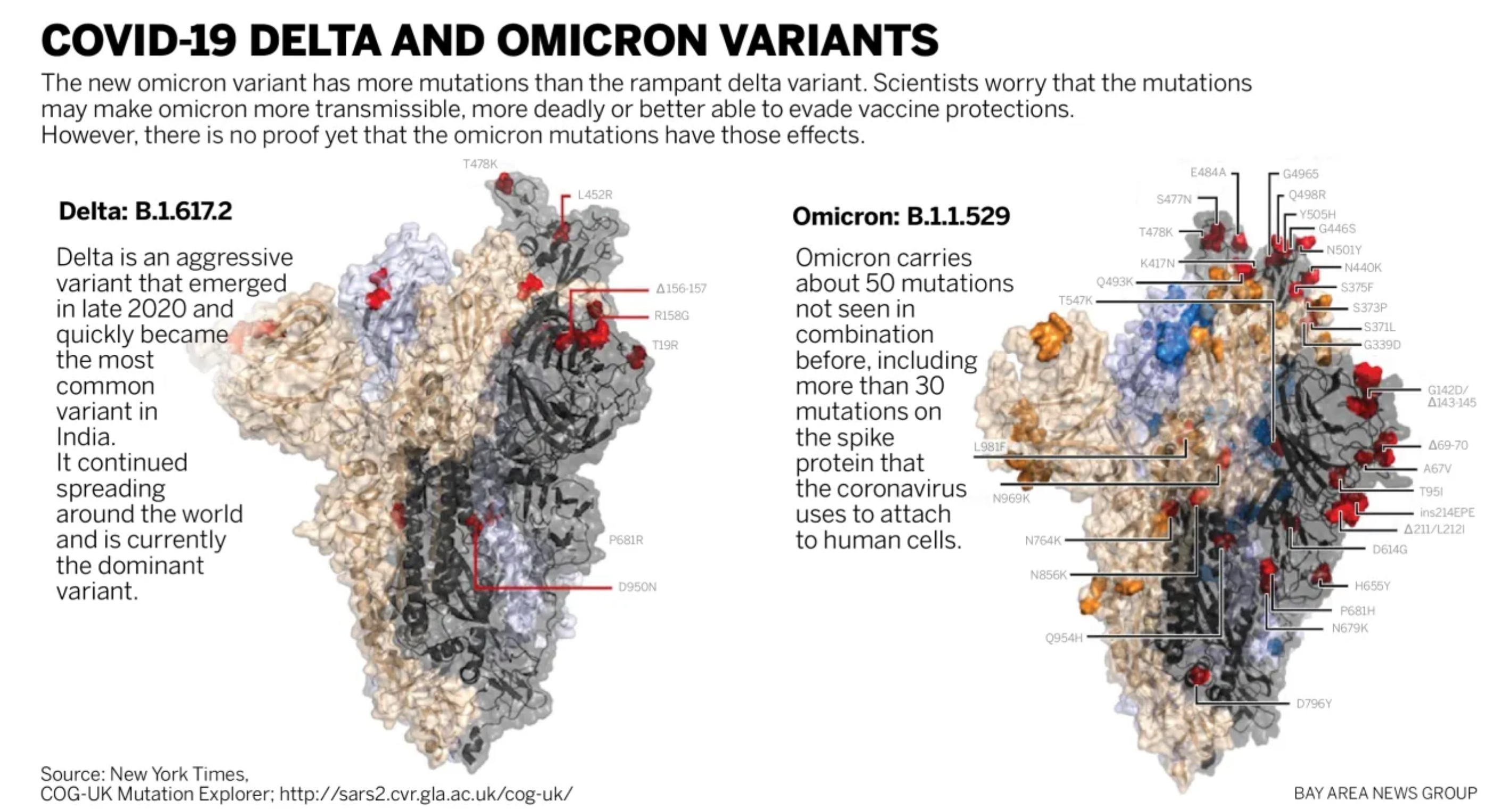

The RNA genome sequence of, say, COVID-19 is really not big, data-wise: you can store it in less than 100 KB, less than an ancient floppy disk. Yet it is increasingly important to understand every single byte of that information, because vaccinating against this global pandemic requires understanding every change in that rapidly evolving pathogen.


Spike proteins of the Delta and Omicron COVID-19 variants, showing the new mutations in the viral pathogen. Simply knowing that there are differences does not mean scientists yet understand the pragmatics of what each difference means or does for human health. Source: New York Times / Bay Area News Group
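As a rough check on the claim that the COVID-19 genome fits in under 100 KB, the sketch below assumes the SARS-CoV-2 reference genome length of 29,903 nucleotides (NCBI reference sequence NC_045512.2) and compares two simple encodings: one ASCII character per base, and a packed 2 bits per base. The helper `storage_bytes` is a hypothetical name used only for illustration.

```python
# Assumption: length of the SARS-CoV-2 reference genome (NC_045512.2), in nucleotides.
GENOME_LENGTH_NT = 29_903
# A 3.5-inch "1.44 MB" floppy actually holds 1,440 KiB.
FLOPPY_BYTES = 1_440 * 1_024


def storage_bytes(length_nt: int, bits_per_base: int) -> int:
    """Bytes needed to store a sequence at a fixed number of bits per base."""
    total_bits = length_nt * bits_per_base
    return (total_bits + 7) // 8  # round up to whole bytes


as_text = storage_bytes(GENOME_LENGTH_NT, 8)  # one ASCII character per base
packed = storage_bytes(GENOME_LENGTH_NT, 2)   # A/C/G/U packed into 2 bits (ignores ambiguity codes)
print(as_text, packed)  # → 29903 7476
print(f"{as_text / FLOPPY_BYTES:.1%} of a floppy")  # → 2.0% of a floppy
```

Even stored naively as text, the whole genome occupies about 2% of a floppy disk; the hard part is interpreting it, not storing it.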


So this next tech cycle needs to scale everything in between small data and big data systems. And the database you use, and the data analytics you perform, need to align with the volume, variety and velocity of the data you have under management.

“Great! Now Make It All Multi-Cloud!”

Also, this next tech cycle is not simply the “cloud computing cycle.” AWS launched in 2006. Google Cloud launched in 2008. And Azure officially launched in 2010. We’re already well over a decade past the dawn of the public cloud. This next tech cycle certainly builds on the environments, methodologies and technologies these hyperscalers provide.


So the database you use also needs to align with where you need to deploy it. Does it only work in the cloud, or can it be deployed on premises, far behind your firewall? Does it only work with one cloud vendor, or is it deployable to any of them? Or to all of them at once? These are important questions.


Just as we do not want to be locked into old ways of doing and thinking, the market does not want to be locked into any one technology vendor.


So if you’ve just been mastering the art of running stateful distributed databases on a single cloud using Kubernetes, now you’re being asked to do it all over again, this time in a hybrid or multi-cloud environment using Anthos, OpenShift, Tanzu, EKS Anywhere or Azure Arc.


Computing Beyond Moore’s Law


Underpinning all of this are the raw capabilities of silicon, summarized by the transistor and core counts of current-generation CPUs. We’ve already reached 64-core CPUs. The next generations will double that, to the point where a single CPU will have more than 100 cores. Fill a rack-based high performance computer with those and you can quickly reach thousands of cores per server.

And all of this is just conventional CPU-based computing. You also have GPU advancements that are powering the world of distributed ledger technologies like blockchain. Plus, all of this is happening at the same time as IBM plans to deliver a 1,000-qubit quantum computer in 2023, and Google plans to deliver a computer with 1 million qubits by 2029.

This next tech cycle is powered by all of these fundamentally new capabilities. They are what’s enabling real-time, full streaming data from anyone to anywhere. And this is just the infrastructure.

If you dive deeper into that infrastructure, you realize that each of the classic hardware bottlenecks is undergoing its own revolution.

We’ve already seen CPU densities growing. Vanilla standalone CPUs are also giving way to full Systems on a Chip (SoCs).

And while such systems have been used in high performance computing in the past, expect to see commonly available server systems with more than 1,000 CPUs. These will be the workhorses, or more properly the warhorses, of this next generation: huge beasts capable of carrying magnificent workloads.

Memory, another classic bottleneck, is getting a big boost from DDR5 today and DDR6 in just a few years. Densities are increasing, so you can expect to see warhorse systems with a full terabyte of RAM. These and larger scales are going to be increasingly common and, for organizations, increasingly affordable.

Storage is also seeing its own revolution with the recently approved NVMe base and transport specifications, which will enable much easier implementation of NVMe over Fabrics.

Nor is this simply the original broadband or wireless Internet revolution; we’re fully 20 years into both of those. The arrival of gigabit broadband and the new, diverse range of 5G services (also capable of scaling to a gigabit) enables incredible new opportunities in real-time data streaming services, IoT and more. And in the datacenter? You’re talking about the steady march toward true terabit Ethernet: earlier this year, the IEEE passed an amendment to support speeds of up to 400 Gbps.

So how does your database work when you need to connect to systems near and far? How much do the limits of the speed of light constrain your latencies? How well do you handle data ingested from many thousands of endpoints at multi-gigabit-per-second scales?
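The speed-of-light question can be made concrete with a lower-bound latency calculation. This sketch assumes that signals in optical fiber travel at roughly two-thirds of c, and the distances are hypothetical examples; real network paths add routing detours, switching, queuing and processing time on top of this physical floor.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
# Assumption: light in fiber travels at about 2/3 of c (refractive index near 1.5).
FIBER_FRACTION_OF_C = 2 / 3


def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on fiber round-trip time, ignoring all other delays."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_PER_S * FIBER_FRACTION_OF_C)
    return 2 * one_way_s * 1000


# Hypothetical distances, chosen only for illustration.
for label, km in [("same metro", 50), ("cross-continent", 4_000), ("intercontinental", 10_000)]:
    print(f"{label:>16}: >= {min_round_trip_ms(km):.1f} ms RTT")
```

No amount of hardware speed removes this floor: a request that must cross a continent and back costs on the order of 40 ms before the database does any work at all, which is why where you deploy matters as much as how fast each node is.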

Now, software will have to play catch-up with these capabilities. Just as it took time for kernels, and then applications within a vertically scaled box, to be made async everywhere, sharded-per-core, shared-nothing, and NUMA-aware, this next tech cycle is going to require systems to adapt to whole new ways of getting the most out of these new hardware capabilities. We will need to rethink many fundamental software assumptions.
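To make “sharded-per-core, shared-nothing” a little more concrete, here is a toy sketch in which every key is owned by exactly one shard, so shards never share data or locks. It is a hypothetical illustration and not ScyllaDB’s actual sharding algorithm: the class `ShardedStore`, the SHA-256-based routing and the shard count of 8 are all assumptions, and a real shard-per-core engine pins one thread to each core and passes messages between shards rather than using plain Python dicts.

```python
import hashlib


class ShardedStore:
    """Toy shared-nothing store: each shard owns a disjoint slice of the keys.

    Hypothetical illustration only; shards here are plain dicts, not pinned threads.
    """

    def __init__(self, num_shards: int):
        self.num_shards = num_shards
        # One private dict per shard; no shard ever touches another shard's data.
        self.shards = [{} for _ in range(num_shards)]

    def shard_for(self, key: str) -> int:
        # Stable hash (unlike Python's per-process salted hash()) so routing is reproducible.
        digest = hashlib.sha256(key.encode()).digest()
        return int.from_bytes(digest[:8], "big") % self.num_shards

    def put(self, key: str, value: str) -> None:
        # Every write goes only to the shard that owns the key.
        self.shards[self.shard_for(key)][key] = value

    def get(self, key: str):
        # Reads are routed the same way, so no cross-shard search is needed.
        return self.shards[self.shard_for(key)].get(key)


store = ShardedStore(num_shards=8)  # hypothetical: one shard per core on an 8-core machine
for i in range(1000):
    store.put(f"user:{i}", f"profile-{i}")
print(store.get("user:42"))  # → profile-42
print([len(shard) for shard in store.shards])  # keys spread across the 8 shards
```

Because ownership is decided purely by the key, adding cores means adding shards rather than adding contention on shared locks, which is the property that lets software keep pace with rising core counts.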

Evolving Methodologies: Agile and Onwards

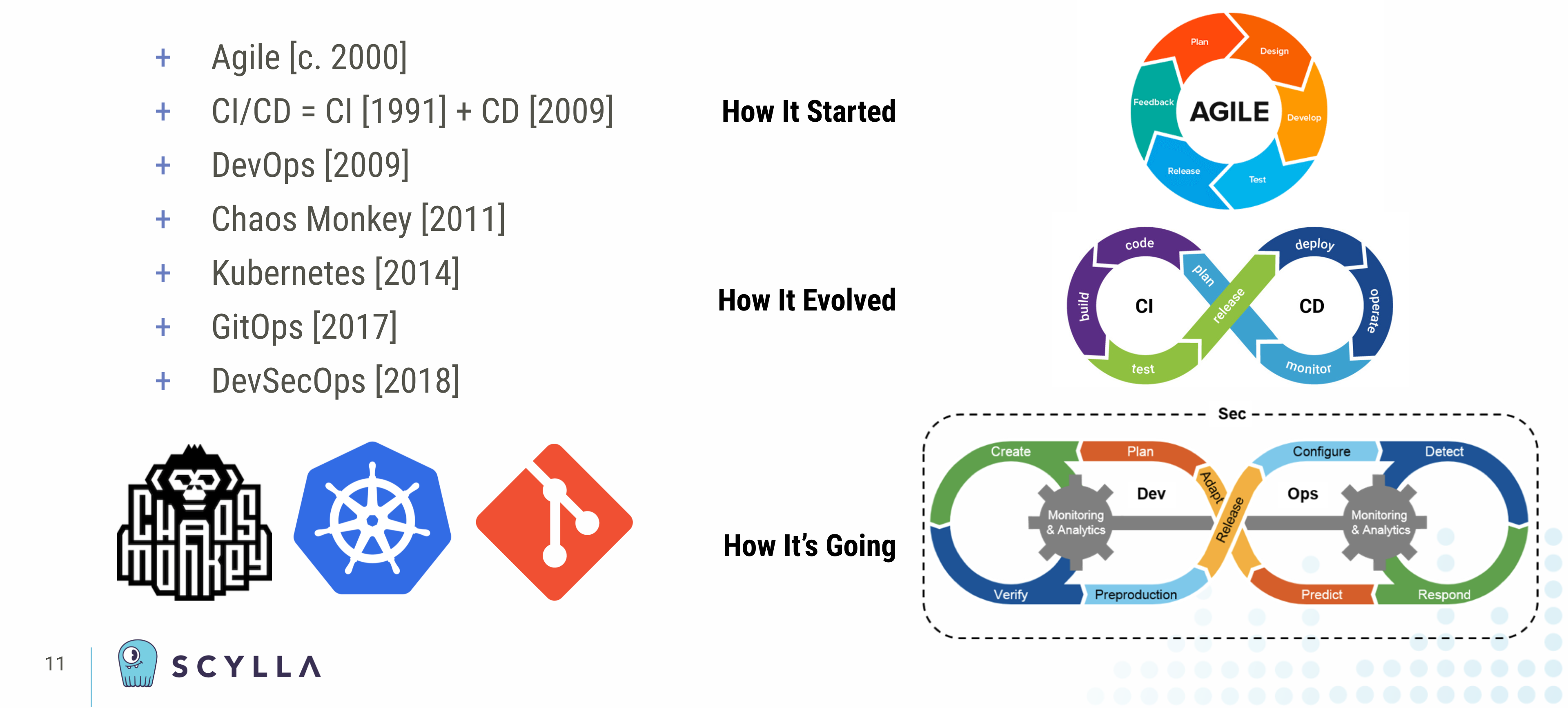

Speaking of methodologies, just look at how they have changed from the dawn of the millennium onward. As an industry, we’ve moved from batch operations and monolithic upgrades performed in multi-hour weekend downtime windows to a world of streaming data and continuous software delivery performed 24 × 7 × 365 with no downtime, ever.

And by embracing the cloud and this always-on world, we’ve exposed ourselves and our organizations to a world of random chaos and security threats. We now need to run fleets of servers autonomously and manage them across on-premises, edge and multiple public cloud vendor environments.

While Scrum has been around since the 1980s, and Continuous Integration since 1991, in this century the twelve principles of the Agile Manifesto (2001) changed the very philosophy underlying the way software is developed, never mind the methodologies.

The Agile Manifesto’s very first principle states that the highest priority is “to satisfy the customer through early and continuous delivery of valuable software.”


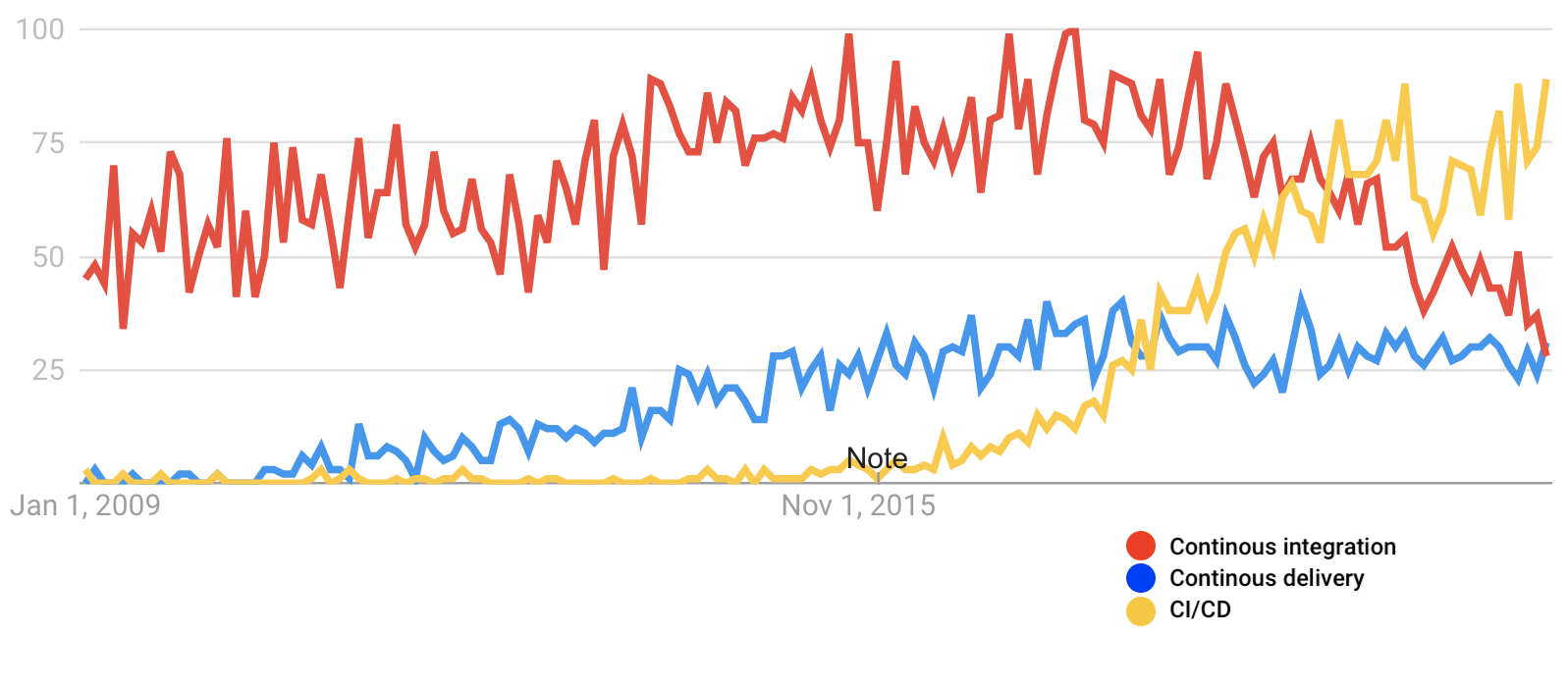

However, the specific term Continuous Delivery (CD), as we know it today, didn’t take hold until 2009. It was then joined at the hip with Continuous Integration and coincided with the birth of what we now know as DevOps.

With that, you had a framework for defining change-oriented processes and software life cycles through a responsive developer culture that now, a decade or two into this revolution, everyone takes as a given.

Graph showing how Continuous Integration (CI) and Continuous Delivery (CD) developed independently. They were eventually joined by the term “CI/CD,” whose popularity as a search term only began to rise c. 2016 and did not overtake the two separate terms until early 2020. It is now increasingly rare to refer to “CI” or “CD” separately. Source: Google Trends


Onto that foundation were built systems, tools and philosophies that extended those core principles. The Chaos Monkeys of the world, as well as the pentesters, want to break your systems (or break into them) to expose problems and flaws long before something catastrophic and stochastic, or someone malicious, does it for you.
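The chaos-engineering idea can be sketched as a tiny experiment: state a steady-state hypothesis, inject a random failure, and check whether the hypothesis still holds. This is a hypothetical sketch that assumes a three-replica cluster serving majority-quorum reads; `ToyCluster` and `chaos_experiment` are illustrative names, not the API of Chaos Monkey or any other tool.

```python
import random


class ToyCluster:
    """Hypothetical toy cluster in which every node holds a full replica of the data."""

    def __init__(self, replicas: int):
        self.up = [True] * replicas

    def quorum_read_ok(self) -> bool:
        # Steady-state hypothesis: a majority of replicas must still be able to respond.
        quorum = len(self.up) // 2 + 1
        return sum(self.up) >= quorum


def chaos_experiment(cluster: ToyCluster, kills: int, rng: random.Random) -> bool:
    """Randomly terminate `kills` distinct nodes, then re-check the hypothesis."""
    for node in rng.sample(range(len(cluster.up)), kills):
        cluster.up[node] = False
    return cluster.quorum_read_ok()


rng = random.Random(42)  # seeded so the experiment is repeatable
print(chaos_experiment(ToyCluster(replicas=3), kills=1, rng=rng))  # True: 2 of 3 still form a quorum
print(chaos_experiment(ToyCluster(replicas=3), kills=2, rng=rng))  # False: 1 of 3 is not a quorum
```

The value lies in running such experiments deliberately and routinely, so that the second result is discovered in a controlled test rather than during a real outage.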


Cloud native technologies like Kubernetes, and single-source-of-truth approaches to infrastructure like GitOps, were built out of the sheer need to scale systems to the many thousands of production software deployments under management.
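At the heart of GitOps is a reconciliation loop: a controller repeatedly compares the desired state declared in Git with what is actually running, and emits actions to close the gap. The sketch below is a hypothetical, minimal version of that comparison step. It assumes state is nothing more than a map from workload name to replica count, and `reconcile` is an illustrative name, not the API of Kubernetes or of any GitOps tool.

```python
from __future__ import annotations


def reconcile(desired: dict[str, int], actual: dict[str, int]) -> list[str]:
    """Return the actions needed to converge `actual` toward `desired`.

    Both maps go from a workload name to its replica count (a simplifying assumption).
    """
    actions = []
    for name, replicas in sorted(desired.items()):
        if name not in actual:
            actions.append(f"create {name} x{replicas}")
        elif actual[name] != replicas:
            actions.append(f"scale {name} {actual[name]}->{replicas}")
    # Anything running that is not declared in the Git repository gets pruned.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(f"delete {name}")
    return actions


# Hypothetical example: Git declares 3 database replicas and 2 API replicas.
desired = {"scylla": 3, "api": 2}
actual = {"scylla": 2, "legacy-cron": 1}
print(reconcile(desired, actual))
# → ['create api x2', 'scale scylla 2->3', 'delete legacy-cron']
print(reconcile(desired, dict(desired)))  # → [] once the system has converged
```

Because the repository is the single source of truth, the same loop scales from one cluster to thousands: each controller only needs to know the declared state and what it currently observes.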

DevSecOps

And it’s not enough. We’ve already seen software supply chain attacks like SolarWinds; low-level system attacks like Spectre, Meltdown and ZombieLoad; and human-factor threats like viral deepfakes and countless fake social media accounts using profile images produced by Generative Adversarial Networks, never mind countless IoT-enabled devices being nefariously used for Distributed Denial of Service botnet attacks. Plus, news just recently broke of the log4j vulnerability; our best wishes to anyone patching code this holiday.


These are all just the bow shocks of what’s to come.


So now your AI-powered security systems are locked in battle every day, in real time, against threat actors trying to undermine your normal operations. We know this because a growing number of intrusion prevention and malware analysis systems are built at terabyte-and-beyond scale using distributed databases like ScyllaDB as their underlying storage engines.


Hence nowadays, rather than just “DevOps,” we increasingly talk about “DevSecOps,” because security cannot be an afterthought. Not even for your MVP. Not in 2021.


And these methodologies are continuing to evolve.

Summary

This next tech cycle is already upon us. You can feel it the same way you’d sense a major life or career change. Maybe it’s a programming language rebase: is it time to rewrite some of your core code in Rust? Or maybe it’s in the way you are thinking about repatriating certain cloud workloads, or extending your favorite cloud services on-premises, such as through an AWS Outpost. Or perhaps you’re actually looking to move data to the edge? Planning for a huge fan-out? Whatever this revolution means to you, you’ll need infrastructure that’s available today in a rock-solid form to put workloads into production now, yet flexible enough to keep growing with your emerging, evolving requirements.


We’ve built ScyllaDB to be that database for you. Whether you want to download Scylla Open Source, sign up for Scylla Cloud, or take Scylla Enterprise for a trial, you have your choice of where and how to deploy a production-ready distributed database for this next tech cycle.


Discover More at Scylla Summit 2022

To discover more about how ScyllaDB is the right database for this next tech cycle, we invite you to join us at Scylla Summit 2022, this coming 9-10 February 2022. You’ll be able to hear about the latest features and capabilities of ScyllaDB, as well as how ScyllaDB is being deployed to handle some of the most challenging use cases in the world. If you are building your own data infrastructure for this next tech cycle, find out how ScyllaDB can be right at the beating heart of it.


The post Defining This Next Tech Cycle appeared first on ScyllaDB.
